Proven Tactics to Drive Traffic to Your Website

Simple Traffic Solutions

This product is a course on how to generate and boost traffic to your business. It was created by John Thornhill, a very successful online marketing expert who discovered ways of getting billions of visitors to his sites. It stands out from others in its category in that none of the methods it teaches relies on advertising, Google, search engine optimization (SEO), or pay-per-click (PPC) marketing. The author departed from the usual approaches and came up with a new, simple, yet effective method for boosting traffic without involving Google. The training's 50 modules explain what traffic is and how to generate it. Each module contains traffic theory, practical exercises, and a checklist that serves as a step-by-step guide through each method. The product also contains tutorial videos that showcase 19 effective tactics for traffic generation. These videos are divided into three phases to make them easier to absorb, and a buyer gets all three phases with a single purchase. Other topics covered extensively in this product are Ad Swapping, Article Marketing, Blogging, Blog Hopping and Guest Blogging, Email Marketing, Facebook Groups and Forums, Free Reports, Integration Marketing, Joint Ventures (JVs), Link Exchanges, Search Engine Optimization (SEO), Signatures, Social Media Networking, Video Marketing, Viral Marketing, and more.

Contents: Video And PDFs

Author: John Thornhill

Official Website: simpletrafficsolutions.com

Price: $47.00

Simple Traffic Solutions

I started using this book straight away after buying it. This is a guide like no other; it is friendly, direct and full of proven practical tips to develop your skills.

If you want to purchase this book, you are just a click away. Click below and buy Simple Traffic Solutions for a reduced price without any waste of time.

Heroo AutoPilot Traffic From 700 Sources

Making money on the internet has become a popular way for many people to earn passive income. It is a highly competitive field, however, and newcomers have to try something new or do something extraordinary. Heroo AutoPilot is a product that claims to help you generate unlimited traffic from 700 sources with just a few clicks. It is a cloud-based program that is said to generate traffic from different sources in just 60 seconds: all you need to do is add a link and click send. Because the sales page doesn't specify where the program sends this unlimited traffic from, some users are skeptical and suspect that the traffic could be nothing but bots. Heroo is billed as the only 20-in-1 platform that integrates email, SMS, social media, and messenger in one click to receive unlimited traffic. When you sign up for the software, you receive a step-by-step guide to help fast-track your learning, along with five premium bonuses that would otherwise cost you thousands of dollars. The 700 sources are said to have a combined 5 billion users who are actively waiting to buy, and the software lets you get in front of them. You don't need any tech skills, experience, or a budget to start using Heroo AutoPilot.

Heroo AutoPilot Traffic From 700 Sources Summary

Contents: Free Traffic

Official Website: getheroo.com

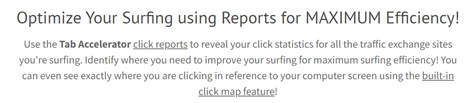

Tab Accelerator

Dave developed this traffic exchange tool to improve your surfing credit efficiency. The software is not a bot and doesn't automate clicking in any way. Automating clicks is strongly discouraged: if a network catches you doing it, it will terminate your account. In addition, a lot of network owners know each other, so if you get banned in one place, you're going to get banned on all the other networks too. If you've used traffic exchanges before, you already know that bots are not a good way to get clicks. However, there's another way to earn more surfing credits. You can get a lot of traffic to your sites, offers, or affiliate offers using the Tab Accelerator software, which makes it very efficient to go quickly through all of your traffic exchange sites. Instead of a hotkey, Tab Accelerator uses your left mouse button: when you click one of the small images to earn your credit, the software moves on to the next tab. That is why it is called the Tab Accelerator; it helps you go through the tabs quickly.

Traffic Sniper AI Traffic App

Traffic Sniper is a traffic app that uses Artificial Intelligence (AI) to manage your marketing activities on multiple sites and boost your site traffic. It aims to deliver quality traffic that helps you get more sales, leads, and revenue. It is cloud-based software that works on most devices, so you can manage your website traffic wherever you go. It boosts your traffic by analyzing data with advanced algorithms, which gives you real-time analysis of every website you want to manage. It helps you improve your search engine optimization (SEO) and rankings and gives you real-time visibility into your sites. The brain behind the Traffic Sniper app is Ian Ross, a mathematician and AI developer with years of experience in data analysis systems. He has developed an outstanding reputation for building algorithms that help companies optimize their search engine rankings. Drawing on his experience as an AI expert, he wanted to create a traffic optimization system that would help marketers get better results for their online businesses. Traffic Sniper is designed to help every e-marketing business grow and increase sales. To get access to the software, you make the required payments on the official website.

Traffic Sniper AI Traffic App Summary

Contents: Free Traffic Software

Creator: Ian Ross

Official Website: grabsniper.net

Iptables NAT Semantics

Masquerading sits on top of forwarding as a separate kernel service. Traffic is masqueraded in both directions, but not symmetrically: masquerading is unidirectional, so only outgoing connections can be initiated. As traffic from local machines passes through the firewall to a remote location, the internal machine's IP address and source port are replaced with the address of the firewall machine's external network interface and a free source port on that interface. The process is reversed for incoming responses. Before the packet is forwarded to the internal machine, its destination IP address and port, which are the firewall's, are replaced with the real IP address and port of the internal machine participating in the connection. The destination port on the firewall machine determines whether incoming traffic, all of which is addressed to the firewall machine, is destined for the firewall machine itself or for a particular local host.
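The address and port rewriting described above can be modeled with a small lookup table. The following is a minimal Python sketch, not the kernel's implementation: the class name `MasqueradeTable`, the starting port 61000, and the example addresses are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

Endpoint = Tuple[str, int]  # (IP address, port)


@dataclass
class MasqueradeTable:
    """Toy model of masquerading; names and port range are hypothetical."""

    external_ip: str
    next_port: int = 61000  # assumed first free port on the external interface
    by_port: Dict[int, Endpoint] = field(default_factory=dict)
    by_source: Dict[Endpoint, int] = field(default_factory=dict)

    def outgoing(self, src: Endpoint) -> Endpoint:
        """Replace an internal source with the firewall address and a free port."""
        if src not in self.by_source:
            port = self.next_port
            self.next_port += 1
            self.by_source[src] = port
            self.by_port[port] = src
        return (self.external_ip, self.by_source[src])

    def incoming(self, dst_port: int) -> Optional[Endpoint]:
        """Map a response's destination port back to the internal host.

        Returns None when no outgoing connection created a mapping, which
        mirrors the rule that only outgoing connections can be initiated.
        """
        return self.by_port.get(dst_port)
```

In this model, a response arriving on a port with no mapping is treated as traffic for the firewall machine itself rather than for a local host.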

Incoming TCP Connection State Filtering

Incoming TCP packet acceptance rules can make use of the connection state flags associated with TCP connections. All TCP connections adhere to the same set of connection states. These states differ between client and server because of the three-way handshake during connection establishment. As such, the firewall can distinguish between incoming traffic from remote clients and incoming traffic from remote servers.
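As a rough illustration of that distinction, the Python sketch below looks only at the SYN and ACK flags of an incoming packet. The function names are hypothetical, and a real firewall rule would also match on addresses and ports.

```python
def is_connection_request(syn: bool, ack: bool) -> bool:
    """True for the first packet of the three-way handshake.

    Only a remote client opening a connection sends SYN without ACK.
    """
    return syn and not ack


def may_be_server_response(syn: bool, ack: bool) -> bool:
    """True when the ACK flag is set.

    Every packet a remote server sends, starting with its SYN+ACK reply,
    carries the ACK flag, so a packet without ACK cannot be a response
    from a remote server.
    """
    return ack
```

A rule set that accepts incoming packets from remote servers only when `may_be_server_response` holds lets local clients talk to remote services without letting those remote hosts open new connections inward.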

Installing and Configuring a Firewall

The gufw tool provides several tabs that make it easy to create various types of rules without having to understand the syntax of the kernel's iptables rules. Once you enable a firewall, all incoming traffic is blocked by default, which is secure but probably not what you want if you plan to support network services such as incoming SSH connections, incoming FTP, and so on. Note that you do not need to select the Allow incoming radio button shown in Figure 25-27 to enable incoming traffic. The radio buttons at the top left of the gufw dialog identify the default behavior of your system, and any rules that you subsequently define represent exceptions to that default. For example, to quickly create a rule that allows incoming SSH connections, make sure that the Simple tab is selected in the Add a new rule section, enter ssh in the text entry field for this rule, and make sure that you are allowing traffic in both directions, as shown in Figure 25-28.

http_inspect: a preprocessor for HTTP

We recommend installing and using the http_inspect preprocessor. Using http_inspect normalizes all packets containing different forms of HTTP communication into a state that Snort can easily compare and scan through its rules. A huge amount of Web traffic crosses the Net, and many attacks rely on the HTTP protocol as their transmission medium. To configure your Snort system so that it normalizes Web traffic, you need to put a few lines in your snort.conf configuration file that look something like the following

Watching Your Web Server Traffic with apachetop

With apachetop, you can see the host machine that your visitors are using, determine whether they came by way of a search engine, and find out what pages they visited while they were there. You can also use apachetop to see if your visitors are repeatedly asking for documents that don't exist. You may want to rename your Web pages if the same page name is mistyped over and over.
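To illustrate the last point, the following Python sketch counts requests for documents that don't exist. It is not part of apachetop; it assumes access log lines in the common Apache log format, and the function name `missing_documents` is hypothetical.

```python
import re
from collections import Counter

# Matches the request path and status code in a common-log-format line,
# e.g. ... "GET /page.html HTTP/1.1" 404 209
LOG_LINE = re.compile(r'"(?:GET|HEAD|POST) (\S+) [^"]*" (\d{3}) ')


def missing_documents(lines):
    """Return (path, count) pairs for 404 responses, most frequent first."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and match.group(2) == "404":
            counts[match.group(1)] += 1
    return counts.most_common()
```

A path that appears near the top of this list again and again is a candidate for renaming or for a redirect.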

Tutorial: Interception Proxying

Ordinarily, when using Squid on a network to cache Web traffic, browsers must be configured to use the Squid system as a proxy. This type of configuration is known as traditional proxying. In many environments, this is simply not an acceptable method of implementation. Therefore, Squid provides a way to operate as an interception proxy, or transparently, which means users do not even need to be aware that a proxy is in place. Web traffic is redirected from port 80 to the port where Squid resides, and Squid acts like a standard Web server for the browser. Using Squid transparently is a two-part process: first, Squid must be configured to accept non-proxy requests, and second, Web traffic must be redirected to the Squid port. The first part of the configuration is performed in the Squid module, while the second part can be performed in the Linux Firewall module.

Understanding and controlling network traffic flow

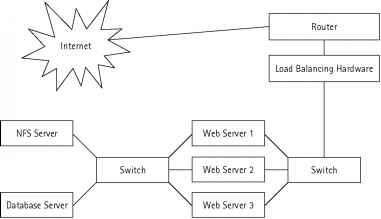

Here three Web servers are providing Web services to the Internet, and they share a network with an NFS server and a database server. What's wrong with this picture? Well, several things. First of all, these machines are still using a dumb hub instead of a switch. Second, the NFS and database traffic is competing with the incoming and outgoing Web traffic. If a Web application needs database access, it generates database requests in response to a Web request from the Internet, which in turn reduces the bandwidth available for other incoming or outgoing Web requests, making the network unnecessarily busy and less responsive. How can you solve such a problem? Using a traffic control mechanism, of course. First determine what traffic can be isolated in this network. Naturally, the database and NFS traffic is only needed to service the Web servers. In such a case, NFS and database traffic should be isolated so that they don't compete with Web traffic.

Using mixmaster to send anonymous email

Mixmaster is described by the Debian package system as follows: "Mixmaster is the reference implementation of the type II remailer protocol which is also called Mixmaster. An anonymous remailer is a computer service that privatizes your email. A remailer allows you to send electronic mail to a Usenet news group or to a person without the recipient knowing your name or your email address. Anonymous remailers provide protection against traffic analysis. This package provides both a client and an optional server installation." First we'll install the mixmaster package. The menus are simple: merely press the first letter of whichever command you want to execute. Let's put a dummy message into the pool by pressing d. Dummy messages provide protection against traffic analysis. You should see something similar to the following, but with a different chain:

Using the haproxy load-balancer for increased availability

HAProxy is a TCP/HTTP load balancer that lets you route incoming traffic destined for one address to a number of different back-ends. The routing is very flexible, and it can be a useful component of a high-availability setup. The general use case for a load balancer is to present a service on a network that is actually fulfilled by a number of different back-end hosts. Incoming traffic is accepted on a single IP address and then passed to one of the back-end hosts to be fulfilled.
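The idea of one front-end address handing requests to several back-ends can be sketched in a few lines of Python. This is an illustration of round-robin distribution, one common scheduling policy, and not HAProxy's own code; the class name and the back-end addresses are assumptions.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out back-end hosts in rotation (hypothetical helper class)."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one back-end is required")
        self._rotation = cycle(list(backends))

    def route(self):
        """Return the back-end that should serve the next incoming request."""
        return next(self._rotation)
```

For example, a balancer built with `["10.0.0.1:80", "10.0.0.2:80"]` alternates between the two hosts on successive calls to `route()`. A real load balancer would also check back-end health and skip hosts that are down.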

Scalable Public Key Infrastructure for both OpenSWAN and OpenVPN

OpenSWAN also implements several other nice features such as MTU control, changing the source address of the connection (so that a multihomed host can use the correct IP when sending traffic over a tunnel which is useful on a gateway), and many others. However, it is not always trivial to make IPSec interoperability actually work. Vendors are free to implement various subsets of the extensions, and there are many parts of the RFCs that are 'may's or 'might's that are not universally implemented. Not all clients support the IPSec extensions that OpenSWAN does. Moreover, OpenSWAN uses a highly baroque configuration syntax, some features are poorly documented, and it sports cryptic error and debug messages (in debugging something simple like client authentication, you typically have to wade through hundreds of lines of messages largely devoted to OpenSWAN internals).

Producing and using website statistics

Similar simple statistics can be obtained from the command line, such as the number of unique visitors to your site. You can see a sample of the output that Awstats produces by looking at the online Awstats sample page, which shows the unique visitors per month, the top search requests that users used to find your site, and other information. The default output of the webalizer script can be seen in the sample reports available on the webalizer site, which contain information about the number of unique visitors per month, the most popular directories, and the most popular files.
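As a rough idea of how a unique-visitor count is derived, the Python sketch below counts distinct client addresses in an access log. It assumes the client address is the first whitespace-separated field of each line, as in the common Apache log format, and treats each distinct address as one visitor; the function name is hypothetical.

```python
def unique_visitors(lines):
    """Count distinct client addresses, taken from the first field of each line."""
    return len({line.split(maxsplit=1)[0] for line in lines if line.strip()})
```

Tools such as Awstats and webalizer apply more refined rules, so their figures may differ from this simple count.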

Searching MySQL databases with fulltext indexes

By default, the indexes you create will only include words of between four and twenty characters in length; this is something that you can adjust if you need to. You should try to think of the search terms your visitors are likely to use and adjust your limits accordingly. A minimum search term length of four letters would not allow visitors to search for terms such as SSH or CVS, which would be a problem on sites like this.
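The effect of the length limits can be previewed before changing any server settings. The Python sketch below filters a list of likely search terms using the limits stated above; the function name and its default values are assumptions taken from this text, not read from a MySQL server.

```python
def indexable_terms(terms, min_len=4, max_len=20):
    """Return the terms whose length falls inside the index's word-length limits."""
    return [term for term in terms if min_len <= len(term) <= max_len]
```

Calling `indexable_terms(["SSH", "CVS", "Debian", "firewall"])` returns `["Debian", "firewall"]`, which shows that three-letter terms would be dropped, while passing `min_len=3` keeps all four.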

Spam filtering with qpsmtpd

By default, the installed file is minimally configured, with most of the checks disabled. To start adding new tests to your server, you will need to adjust the plugins file. As an example, you can drop clients that start sending traffic to your server without waiting for the prompt to be sent. This is done by enabling the earlytalker plugin.

Joining disparate hosts into a VPN with gvpe

If you assign each host a private address and configure them all to be in a VPN, then traffic between them will be encrypted. That means that even if your hosting company were to sniff your traffic, they wouldn't see your database queries over the wire. (Of course, if you don't trust your hosting company you've got bigger problems: they could clone your disks, subvert your hardware, or worse.)

Creating a Wiki with kwiki

For your visitors to be able to modify the page contents, you must allow the Apache process to write to the various files within the installation. Once you've done that, you and your visitors will be able to edit the text of any page. The pages themselves are stored beneath the database directory, with the editing information stored beneath the metabase/metadata directory.

Attention Advertisers

Google Analytics Reporting Suite: If you look at Google Analytics information very often, this application will save you time. Its functionality isn't much greater than visiting Google's Web site, but the speed and convenience are nice. Google Analytics Reporting Suite works under Linux AIR 1.1 Beta: yes. Figure 7. Browsing through Google Analytics information is simple with this application. Google Analytics Reporting Suite: Available on the Adobe AIR Marketplace (see above)

Introducing Squid

Standard proxy server: Basically, you can use Squid as a proxy cache. This serves two goals: it makes transmitting traffic to and from the Internet faster, and it adds security. In this role, Squid is a proxy that sits between a user and the Internet. The user sends all HTTP requests to the proxy and not directly to the Internet. The users' computers do not even need a direct connection to the Internet to generate web traffic. The proxy handles all the traffic for the user and fetches the required data from the Internet. Then the proxy caches this data locally. The next time another user needs the same data, it does not have to be fetched from the Internet again; it can be served from the cache that the proxy maintains. Used in this way, the main advantage is that the Squid proxy increases speed for clients that need to get data from the Internet. This chapter describes how you can use Squid in this way.
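The fetch-once, serve-many behavior described above can be sketched as follows. This is a simplified Python model and not how Squid is implemented: the class name `CachingProxy` and the `fetch` callback are hypothetical, and the sketch ignores expiry, cache-control headers, and storage limits.

```python
class CachingProxy:
    """Toy proxy cache: fetches each URL from the origin at most once."""

    def __init__(self, fetch):
        self._fetch = fetch       # callable that retrieves a URL from the Internet
        self._cache = {}          # URL -> cached response body
        self.origin_requests = 0  # how many times the origin was contacted

    def get(self, url):
        """Return the data for url, contacting the origin only on a cache miss."""
        if url not in self._cache:
            self.origin_requests += 1
            self._cache[url] = self._fetch(url)
        return self._cache[url]
```

When two users request the same URL through one `CachingProxy` instance, `origin_requests` stays at 1, which is the source of the speed gain.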

Djbdns

Let's face it, DNS is not the most sexy component of the Internet's infrastructure. It is an old technology and doesn't get the same attention as newer, more flashy tools and software. Your Web site visitors may comment on how cool your new AJAX widget is, but I guarantee they will never tell the world how pleased they are with your DNS response time.

Mick Bauer

But the fact is, organizations have the right to manage their bandwidth and other computing resources as they see fit (provided they're honest with their members/employees about privacy expectations), and security professionals are frequently in the best position to know what's going on. Firewalls and Web proxies are typically the most convenient choke points for monitoring or filtering Web traffic.

More Products

| Product | Official Website |
| --- | --- |
| Traffic Bots 10 Affiliate Tools | trafficautobot.com |
| Traffic Ivy | trafficivy.com |
| Trafficzion Method | www.trafficzionmethod.com |
| Ad Trackz Gold Ad Tracking And Link Cloaking Wordpress Plugin | |