Idle and init Thread

The last two actions of start_kernel are as follows:

1. rest_init starts a new thread that, after performing a few more initialization operations as described in the next step, ultimately calls the userspace initialization program /sbin/init.

2. The first, and formerly only, kernel thread becomes the idle thread that is called when the system has nothing else to do.

rest_init is essentially implemented in just a few lines of code:

init/main.c
static void rest_init(void)
{
        kernel_thread(kernel_init, NULL, CLONE_FS | CLONE_SIGHAND);
        pid = kernel_thread(kthreadd, NULL, CLONE_FS | CLONE_FILES);
        kthreadd_task = find_task_by_pid(pid);
        unlock_kernel();
...
}

Once a new kernel thread named init (which will start the init task) and another thread named kthreadd (which will be used by the kernel to start kernel daemons) have been started, the kernel invokes unlock_kernel to unlock the big kernel lock and makes the existing thread the idle thread by calling cpu_idle. Prior to this, schedule must be called at least once to activate the other thread.

The idle thread uses as little system power as possible (this is very important in embedded systems) and relinquishes the CPU to runnable processes as quickly as possible. In addition, it handles turning off the periodic tick completely if the CPU is idle and the kernel is compiled with support for dynamic ticks as discussed in Chapter 15.

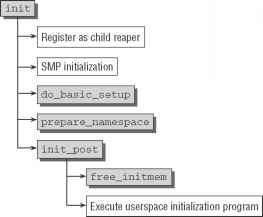

The init thread, whose code flow diagram is shown in Figure D-3, exists in parallel with the idle thread and kthreadd.

First, the current task needs to be registered as child_reaper for the global PID namespace. The kernel makes it very clear what its intentions are:

init/main.c
static int __init kernel_init(void * unused)
{
...
        /*
         * Tell the world that we're going to be the grim
         * reaper of innocent orphaned children.
         */
        init_pid_ns.child_reaper = current;
...
}

Figure D-3: Code flow diagram for init.

Up to now, the kernel has used just one of the several CPUs on multiprocessor systems, so it's time to activate the others. This is done in the following three steps:

1. smp_prepare_cpus ensures that the remaining CPUs are activated by executing their architecture-specific boot sequences. However, the CPUs are not yet linked into the kernel scheduling mechanism and are therefore still not available for use.

2. do_pre_smp_initcalls is, despite its name, a mix of symmetric multiprocessing and uniprocessor initialization routines. On SMP systems, its primary task is to initialize the migration queue used to move processes between CPUs as discussed in Chapter 2. It also starts the softIRQ daemons (see footnote 3).

3. smp_init enables the remaining CPUs in the kernel so that they are available for use.

Driver Setup

The next init step is to start general initialization of drivers and subsystems using the do_basic_setup function whose code flow diagram is shown in Figure D-4.

Some of the functions are quite extensive but not very interesting. They simply initialize further kernel data structures already discussed in the chapters on the specific subsystems. driver_init sets up the data structures of the general driver model, and init_irq_proc registers entries with information about IRQs in the proc filesystem. init_workqueues generates the events work queue, and usermodehelper_init creates the khelper work queue.

Footnote 3: To be precise, the kernel invokes a callback function that starts the daemons when a CPU is activated by the kernel. Suffice it to say that ultimately an instance of the daemon is started for each CPU.

[Diagram: do_basic_setup calls, in order, init_workqueues, usermodehelper_init, driver_init, init_irq_proc, and do_initcalls.]

Figure D-4: Code flow diagram for do_basic_setup.

Much more interesting is do_initcalls, which is responsible for invoking the driver-specific initialization functions. Because the kernel can be custom configured, a facility must be provided to determine the functions to be invoked and to define the sequence in which they are executed. This facility is known as the initcall mechanism and is discussed in detail later in this section.

The kernel defines the following macros to detect the initialization routines and to define their sequence or priority:

#define __define_initcall(level,fn,id) \
        static initcall_t __initcall_##fn##id __attribute_used__ \
        __attribute__((__section__(".initcall" level ".init"))) = fn

#define pure_initcall(fn)       __define_initcall("0",fn,0)
#define core_initcall(fn)       __define_initcall("1",fn,1)
#define postcore_initcall(fn)   __define_initcall("2",fn,2)
#define arch_initcall(fn)       __define_initcall("3",fn,3)
#define subsys_initcall(fn)     __define_initcall("4",fn,4)
#define fs_initcall(fn)         __define_initcall("5",fn,5)
#define rootfs_initcall(fn)     __define_initcall("rootfs",fn,rootfs)
#define device_initcall(fn)     __define_initcall("6",fn,6)
#define late_initcall(fn)       __define_initcall("7",fn,7)

The names of the functions are passed to the macros as parameters, for example, device_initcall(time_init_device) and subsys_initcall(pcibios_init). This generates an entry in the .initcalllevel.init section, where level stands for the level string passed to the macro. The initcall_t entry type is used and is defined as follows:

typedef int (*initcall_t)(void);

This is a pointer to functions that do not expect an argument and return an integer to indicate their status.
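The registration scheme can be imitated in a userspace program. The following is a minimal sketch, not the kernel's implementation. It assumes GCC or Clang on an ELF platform with GNU ld or a compatible linker, which provides __start_NAME and __stop_NAME symbols for every section whose name is a valid C identifier. The section names (initcall1, initcall4, initcall6) and the names DEFINE_INITCALL, run_level, run_initcalls, and boot_log are invented for this illustration; the kernel uses dotted section names and a linker script instead.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

typedef int (*initcall_t)(void);

/* Store a pointer to fn in the section "initcall<level>" (illustrative names). */
#define DEFINE_INITCALL(level, fn) \
	static initcall_t initcall_##fn \
	__attribute__((used, section("initcall" #level))) = fn

#define core_initcall(fn)   DEFINE_INITCALL(1, fn)
#define subsys_initcall(fn) DEFINE_INITCALL(4, fn)
#define device_initcall(fn) DEFINE_INITCALL(6, fn)

/* Records the order in which the demo initcalls ran. */
char boot_log[16];

static void log_step(char c)
{
	size_t n = strlen(boot_log);

	assert(n + 1 < sizeof(boot_log));
	boot_log[n] = c;
	boot_log[n + 1] = '\0';
}

static int demo_device_init(void) { log_step('d'); return 0; }
static int demo_core_init(void)   { log_step('c'); return 0; }
static int demo_subsys_init(void) { log_step('s'); return 0; }

/* Registered in "wrong" source order on purpose: the level decides. */
device_initcall(demo_device_init);
core_initcall(demo_core_init);
subsys_initcall(demo_subsys_init);

/* Section boundaries supplied by the linker. */
extern initcall_t __start_initcall1[], __stop_initcall1[];
extern initcall_t __start_initcall4[], __stop_initcall4[];
extern initcall_t __start_initcall6[], __stop_initcall6[];

/* Call every entry of one level; return the number of failed calls. */
static int run_level(initcall_t *start, initcall_t *end)
{
	int failures = 0;

	for (initcall_t *call = start; call < end; call++)
		if ((*call)() != 0)
			failures++;
	return failures;
}

/* Run the levels in ascending order, as the linker script does for the kernel. */
int run_initcalls(void)
{
	int failures = 0;

	failures += run_level(__start_initcall1, __stop_initcall1);
	failures += run_level(__start_initcall4, __stop_initcall4);
	failures += run_level(__start_initcall6, __stop_initcall6);
	return failures;
}
```

Calling run_initcalls() runs the core routine first, then the subsystem routine, then the device routine, regardless of the order in which they were registered in the source file. Note that in this sketch every level must contain at least one entry, because the linker only creates the boundary symbols for sections that exist.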

The linker places initcall sections one after the other in the correct sequence in the binary file. The order is defined in the architecture-independent file <include/asm-generic/vmlinux.lds.h>, as shown here:

<asm-generic/vmlinux.lds.h>

#define INITCALLS \

*(.initcallrootfs.init) \

This is how a linker file employs the specification (the linker script for Alpha processors is shown here, but the procedure is practically the same on all other systems):

arch/alpha/kernel/vmlinux.lds.S

INITCALLS

The linker holds the start and end of the initcall range in the __initcall_start and __initcall_end variables, which are visible in the kernel and whose benefits are described shortly.

The mechanism described defines only the call sequence of the different initcall categories. The call sequence of the functions in the individual categories is defined implicitly by the position of the specified binary file in the link process and cannot be modified manually from within the C code.

Because the compiler and linker do the preliminary work, the task of do_initcalls is not all that complicated, as the following glance at the kernel sources shows:

init/main.c
static void __init do_initcalls(void)
{
        initcall_t *call;
        int count = preempt_count();

        for (call = __initcall_start; call < __initcall_end; call++) {
                char *msg;
                int result;

                if (initcall_debug) {
                        printk("calling initcall 0x%p\n", *call);
                        ...
                }
                ...
        }

        /* Make sure there is no pending stuff from the initcall sequence */
        flush_scheduled_work();
}

Basically, the code iterates through all entries in the .initcall section whose boundaries are indicated by the variables defined automatically by the linker. The addresses of the functions are extracted, and the functions are invoked. Once all initcalls have been executed, the kernel uses flush_scheduled_work to flush any remaining keventd work queue entries that may have been created by the routines.
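The loop can be tried out in userspace with an ordinary array standing in for the linker-generated range. This is a minimal sketch under stated assumptions: demo_preempt_count, demo_initcalls, the three demo routines, and run_initcall_range are hypothetical names, and the balance check reflects an assumption about why the excerpt saves preempt_count() before the loop, which the excerpt itself does not show.

```c
#include <assert.h>
#include <stdio.h>

typedef int (*initcall_t)(void);

/* Hypothetical stand-in for the kernel's preemption counter. */
int demo_preempt_count;

static int good_init(void)    { return 0; }
static int failing_init(void) { return -19; }                 /* nonzero status */
static int leaky_init(void)   { demo_preempt_count++; return 0; }

/* Plays the role of the range between __initcall_start and __initcall_end. */
initcall_t demo_initcalls[] = { good_init, failing_init, leaky_init };

/* Walk [start, end), call every entry, and return the number of problems found. */
int run_initcall_range(initcall_t *start, initcall_t *end)
{
	int count = demo_preempt_count;
	int problems = 0;

	for (initcall_t *call = start; call < end; call++) {
		int result = (*call)();

		if (result != 0) {
			printf("initcall %d returned %d\n",
			       (int)(call - start), result);
			problems++;
		}
		if (demo_preempt_count != count) {
			printf("initcall %d left the counter unbalanced\n",
			       (int)(call - start));
			demo_preempt_count = count;   /* restore, then carry on */
			problems++;
		}
	}
	return problems;
}
```

With the three demo routines, run_initcall_range(demo_initcalls, demo_initcalls + 3) reports two problems: one nonzero return value and one unbalanced counter.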

Removing Initialization Data

Functions to initialize data structures and devices are normally needed only when the kernel is booted and are never invoked again. To indicate this explicitly, the kernel defines the __init attribute, which is prefixed to the function declaration as shown previously in the kernel source sections. The attribute is defined as follows:

#define __init __attribute__ ((__section__ (".init.text"))) __cold
#define __initdata __attribute__ ((__section__ (".init.data")))

The kernel also enables data to be declared as initialization data by means of the __initdata attribute.
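The effect of such section attributes can be observed in a userspace program. The following is a minimal sketch, assuming GCC or Clang on an ELF platform whose linker provides __start_NAME and __stop_NAME symbols for sections with C-identifier names. The macros DEMO_INIT and DEMO_INITDATA, the section names, and all function names are invented for the illustration and are not the kernel's definitions.

```c
#include <assert.h>
#include <stdint.h>

/* Illustrative counterparts of __init and __initdata; section names are assumptions. */
#define DEMO_INIT     __attribute__((used, noinline, section("demo_init_text")))
#define DEMO_INITDATA __attribute__((used, section("demo_init_data")))

static int DEMO_INITDATA demo_table_size = 64;

static int DEMO_INIT demo_setup(void)
{
	return demo_table_size * 2;
}

/* Section boundaries supplied by the linker. */
extern char __start_demo_init_text[], __stop_demo_init_text[];
extern char __start_demo_init_data[], __stop_demo_init_data[];

/* Return 1 if addr lies inside [start, stop). */
static int in_range(uintptr_t addr, const char *start, const char *stop)
{
	return addr >= (uintptr_t)start && addr < (uintptr_t)stop;
}

/* Is the setup function located in the dedicated code section? */
int setup_is_init_code(void)
{
	return in_range((uintptr_t)&demo_setup,
			__start_demo_init_text, __stop_demo_init_text);
}

/* Is the variable located in the dedicated data section? */
int size_is_init_data(void)
{
	return in_range((uintptr_t)&demo_table_size,
			__start_demo_init_data, __stop_demo_init_data);
}

int run_demo_setup(void)
{
	return demo_setup();
}
```

Because all marked objects end up between two known addresses, a single range describes everything that could be discarded once initialization is complete, which is the property the kernel relies on.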

The linker writes functions and data labeled with __init or __initdata to a specific section of the binary file as follows (linker scripts on other architectures are almost identical to the Alpha version shown here):

arch/alpha/kernel/vmlinux.lds.S

/* Will be freed after init */
. = ALIGN(PAGE_SIZE);
/* Init code and data */
__init_begin = .;
.init.text : {
...
INITCALLS
...

A few other sections are also added to the initialization section that includes, for example, the initcalls discussed previously. However, for the sake of clarity, this appendix does not describe all data and function types removed from memory by the kernel on completion of booting.

free_initmem is one of the last actions invoked by init; it frees the kernel memory between __init_begin and __init_end. The values of these two variables are set automatically by the linker, and the function is implemented as follows:

arch/i386/mm/init.c
void free_init_pages(char *what, unsigned long begin, unsigned long end)
{
        unsigned long addr;

        for (addr = begin; addr < end; addr += PAGE_SIZE) {
                ClearPageReserved(virt_to_page(addr));
                init_page_count(virt_to_page(addr));
                memset((void *)addr, POISON_FREE_INITMEM, PAGE_SIZE);
                free_page(addr);
                totalram_pages++;
        }
        printk(KERN_INFO "Freeing %s: %luk freed\n", what, (end - begin) >> 10);
}

void free_initmem(void)
{
        free_init_pages("unused kernel memory",
                        (unsigned long)(&__init_begin),
                        (unsigned long)(&__init_end));
}

Although this is an architecture-specific function, its definition is virtually identical on all supported architectures. For brevity's sake, only the IA-32 version is described. The code iterates through the individual pages reserved by the initialization data and returns them to the buddy system using free_page. A message is then output indicating how much memory was freed, usually around 200 KiB.
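The page-by-page walk and the arithmetic behind the message can be reproduced in userspace. The following is a minimal sketch that uses an ordinary heap buffer in place of the init region. DEMO_PAGE_SIZE, DEMO_POISON (the byte value 0xcc is an assumption of this sketch), demo_totalram_pages, and both function names are hypothetical; nothing is handed to a real page allocator here.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

#define DEMO_PAGE_SIZE 4096UL
#define DEMO_POISON    0xcc   /* assumed poison byte for this sketch */

/* Counts the pages "returned" by the loop below. */
unsigned long demo_totalram_pages;

/* Poison every page in [begin, end) and count it; yields the KiB released. */
unsigned long demo_free_init_pages(unsigned char *begin, unsigned char *end)
{
	for (unsigned char *addr = begin; addr < end; addr += DEMO_PAGE_SIZE) {
		memset(addr, DEMO_POISON, DEMO_PAGE_SIZE);
		demo_totalram_pages++;
	}
	/* Same arithmetic as the printk in the excerpt: bytes >> 10 = KiB. */
	return (unsigned long)(end - begin) >> 10;
}

/* Run the loop over a freshly allocated region of the given number of pages. */
unsigned long demo_release_region(unsigned long pages)
{
	unsigned long bytes = pages * DEMO_PAGE_SIZE;
	unsigned char *region = malloc(bytes);
	unsigned long kib;

	assert(region != NULL);
	kib = demo_free_init_pages(region, region + bytes);
	assert(region[0] == DEMO_POISON && region[bytes - 1] == DEMO_POISON);
	free(region);
	return kib;
}
```

For a region of four pages of 4,096 bytes each, the loop runs four times and the function reports 16 KiB.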

Starting Userspace Initialization

As its final action, init invokes init_post, which in turn launches a program that continues initialization in userspace so that users are provided with a system on which they can work. Under Unix and Linux, this task is traditionally delegated to /sbin/init. If this program is not available, the kernel tries a number of alternatives. The name of an alternative program can be passed to the kernel with init=program on the command line; the name is stored in execute_command when the command line is parsed, and an attempt is made to start this program before the default options. If none of the options works, a kernel panic is triggered because the system is unusable, as shown here:

init/main.c
static int noinline init_post(void)
{
...
        if (execute_command) {
                run_init_process(execute_command);
                printk(KERN_WARNING "Failed to execute %s. Attempting "
                                        "defaults...\n", execute_command);
        }
        run_init_process("/sbin/init");
        run_init_process("/etc/init");
        run_init_process("/bin/init");
        run_init_process("/bin/sh");

        panic("No init found. Try passing init= option to kernel.");
}
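The fallback order can be expressed as a small selection routine. The following is a minimal userspace sketch: instead of executing anything, it asks a caller-supplied probe whether a candidate is usable and returns the first hit. The names pick_init, probe_fn, probe_executable, and the three test probes are hypothetical; only the candidate paths and their order are taken from the excerpt.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>
#include <unistd.h>

/* Default candidates in the order used by init_post in the excerpt. */
static const char *const default_inits[] = {
	"/sbin/init", "/etc/init", "/bin/init", "/bin/sh", NULL
};

typedef int (*probe_fn)(const char *path);

/* Probe for a real system: is the path an executable file? */
int probe_executable(const char *path)
{
	return access(path, X_OK) == 0;
}

/*
 * Return the first usable program: the init= override if it is given and
 * usable, then the defaults in order. NULL corresponds to the kernel panic.
 */
const char *pick_init(const char *execute_command, probe_fn usable)
{
	if (execute_command && usable(execute_command))
		return execute_command;
	for (size_t i = 0; default_inits[i] != NULL; i++)
		if (usable(default_inits[i]))
			return default_inits[i];
	return NULL;
}

/* Hypothetical probes that pretend only certain paths exist. */
int only_bin_sh(const char *path)  { return strcmp(path, "/bin/sh") == 0; }
int only_custom(const char *path)  { return strcmp(path, "/opt/demo-init") == 0; }
int nothing_usable(const char *path) { (void)path; return 0; }
```

On a real system, pick_init(NULL, probe_executable) returns the first default that exists and is executable. The kernel does not probe first; it simply attempts each execution in turn and moves on when the attempt returns.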

run_init_process sets up a minimal environment for the init process as follows:

init/main.c
static char * argv_init[MAX_INIT_ARGS+2] = { "init", NULL, };
char * envp_init[MAX_INIT_ENVS+2] = { "HOME=/", "TERM=linux", NULL, };

static void run_init_process(char *init_filename)
{
        argv_init[0] = init_filename;
        kernel_execve(init_filename, argv_init, envp_init);
}

kernel_execve is a wrapper for the sys_execve system call, which must be provided by each architecture.
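A userspace counterpart of run_init_process can be written with the execve system call. The following is a minimal sketch with one deliberate difference: it forks first so that the calling program survives, whereas the kernel thread is replaced by the new program. The names demo_argv, demo_envp, and demo_run_init_process are hypothetical, and the exit code 127 for a failed execve is a convention chosen for this sketch.

```c
#include <assert.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Minimal argument vector and environment, modeled on the excerpt. */
static char *demo_argv[] = { "init", NULL };
static char *demo_envp[] = { "HOME=/", "TERM=linux", NULL };

/* Try to run path in a child process; return its exit status, or -1 on error. */
int demo_run_init_process(const char *path)
{
	pid_t pid = fork();
	int status;

	if (pid < 0)
		return -1;
	if (pid == 0) {
		demo_argv[0] = (char *)path;
		execve(path, demo_argv, demo_envp);
		_exit(127);   /* only reached if execve failed */
	}
	if (waitpid(pid, &status, 0) < 0)
		return -1;
	return WIFEXITED(status) ? WEXITSTATUS(status) : -1;
}
```

As in the kernel code, control only continues past the execve call if the program could not be started, which is what allows the next candidate to be tried.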
